This article is part of the Micro AI series.

This morning I ran a database test. 54 files generated. By hand, with our existing tooling, that’s a couple of hours of work. With Claude Code, it took less than a minute.

Then I ran a bigger one. 582 files. By hand, several days for a senior DBA. By lunch, all of it tested end to end across two environments.

I want to walk through what actually happened, because the numbers are only half the story.

The problem this workflow solves

Before any of the numbers make sense, the context.

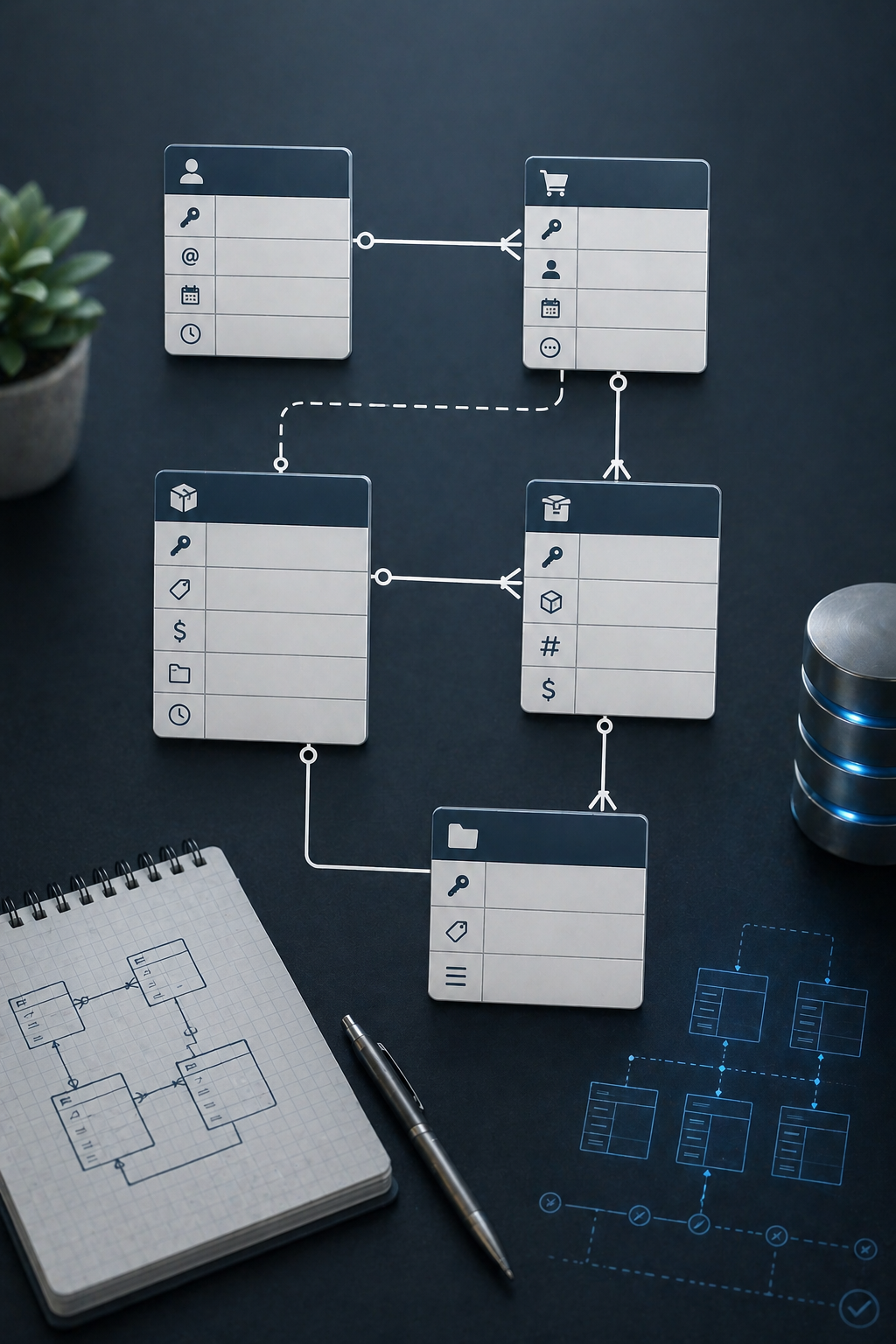

We run three environments: dev, UAT and production. For a long time, schema and reference data drifted between them because changes were being made directly in individual databases, without tickets, without a paper trail. Developers worked off snapshots. A snapshot captures a moment. A database keeps changing. SQL that was valid when the snapshot was taken would break weeks later against a different shape of database.

The pattern that followed was predictable. Something breaks in UAT or prod, everyone points at the SQL, and the conversation becomes “your quality sucks.” It wasn’t a quality problem. It was a coordination problem dressed up as one.

The fix was Flyway, paired with a ticket-driven process. Every database change is a migration. Every migration is tied to a ticket. Nothing lands in any environment without going through the pipeline.

On top of that we added a pattern for changes that need to be gated. Inserts go in disabled, with a matching enable script and disable script sitting alongside. At the moment we need the change active, we run the enable. If we need to back it out, we run the disable. The decision about whether a change is live is separated from the decision about whether the change exists. The inserts deploy as a unit. The activation is controlled independently.

Every insert in this workflow ships with its siblings:

- I, the insert, deployed disabled

- E, the enable script, run when we want the change active

- D, the disable script, run when we want to turn it off
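As a rough illustration of the three siblings, here is a minimal Python sketch that builds the I, E and D scripts for a single row. It is not the actual generator: the table name `app_config`, its columns and the `enabled` flag are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfigRow:
    """One configuration row; the fields are hypothetical."""
    key: str
    locale: str
    value: str


def sql_literal(text: str) -> str:
    """Quote a string as a SQL literal, doubling embedded single quotes."""
    return "'" + text.replace("'", "''") + "'"


def build_scripts(row: ConfigRow, table: str = "app_config") -> dict[str, str]:
    """Return the insert (I), enable (E) and disable (D) scripts for one row."""
    where = (
        f"WHERE config_key = {sql_literal(row.key)} "
        f"AND locale = {sql_literal(row.locale)}"
    )
    return {
        # I: the row lands disabled, so deploying it changes no behavior yet.
        "I": (
            f"INSERT INTO {table} (config_key, locale, config_value, enabled) "
            f"VALUES ({sql_literal(row.key)}, {sql_literal(row.locale)}, "
            f"{sql_literal(row.value)}, 0);"
        ),
        # E and D only flip the flag; they never touch the row's content.
        "E": f"UPDATE {table} SET enabled = 1 {where};",
        "D": f"UPDATE {table} SET enabled = 0 {where};",
    }


scripts = build_scripts(ConfigRow("vat_rate", "de-DE", "19"))
for kind, sql in scripts.items():
    print(kind, sql)
```

The point of the shape is that E and D are pure flag flips, so activating or backing out a change never re-runs the insert.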

Two environments (dev and UAT) times three files per insert is where the file counts explode: six files for every insert. The first test produced 54 files. The second had 97 inserts. That’s how you get 582 files from a morning’s worth of ticket work.
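The multiplication is simple enough to check in a few lines of Python. The constant names are illustrative; the counts come from the numbers in this article.

```python
ENVIRONMENTS = ("dev", "uat")
SCRIPT_KINDS = ("I", "E", "D")


def file_count(inserts: int) -> int:
    """Files produced when every insert ships I, E and D in each environment."""
    return inserts * len(SCRIPT_KINDS) * len(ENVIRONMENTS)


second_test_files = file_count(97)
print(second_test_files)  # 97 * 3 * 2 = 582
```

By the same arithmetic, a 54-file run corresponds to 9 inserts.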

Why the batches get big

There’s one more piece of context worth naming.

The database this workflow manages holds configuration rows for multiple languages and regional settings. Most database work is driven from inside the organization, one or two rows at a time, small tickets, steady pace. That’s the easy part.

The hard part is the work driven from outside. Regulatory changes. Tax law updates. A new policy in a country you operate in. When a government passes a rule that affects how the product behaves in a region, the change isn’t optional and the scope isn’t negotiable. If 60 configuration rows have to move to comply, 60 rows have to move. If the deadline is three weeks out, three weeks is what you have.

Those are the days that used to hurt. Onesies and twosies are a solved problem. Sixty-row policy-driven changes were where the existing tooling started to strain. Every row still needed its insert, its enable, its disable, across every environment. The work multiplied fast, the deadline didn’t, and the senior DBA was the bottleneck.

This is the shape of work where collapsing generation from days to minutes isn’t a productivity win. It’s a survivability win.

The morning

Test one started at around 10:00. 54 files, generated in under a minute. I ran Flyway against dev and UAT in parallel. The whole cycle, including a teardown back to baseline so I could test again, was done in a couple of minutes.
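Running both environments in parallel can be sketched roughly as below. This is an assumption-heavy illustration, not the actual setup: the config file paths are hypothetical, and it assumes the `flyway` command-line tool is on the PATH with one config file per environment passed through `-configFiles`.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Sequence

# Hypothetical per-environment Flyway config files; the article does not name them.
CONFIG_FILES = {"dev": "conf/dev.conf", "uat": "conf/uat.conf"}


def flyway_command(env: str) -> list[str]:
    """Build the Flyway CLI invocation for one environment."""
    return ["flyway", f"-configFiles={CONFIG_FILES[env]}", "migrate"]


def run_command(command: Sequence[str]) -> int:
    """Run one command and return its exit code."""
    return subprocess.run(command, check=False).returncode


def migrate_all(
    envs: Sequence[str],
    runner: Callable[[Sequence[str]], int] = run_command,
) -> dict[str, int]:
    """Run the migration for every environment concurrently; map env to exit code."""
    with ThreadPoolExecutor(max_workers=max(1, len(envs))) as pool:
        futures = {env: pool.submit(runner, flyway_command(env)) for env in envs}
        return {env: future.result() for env, future in futures.items()}


if __name__ == "__main__":
    # Requires a real Flyway installation and the config files above.
    print(migrate_all(["dev", "uat"]))
```

The `runner` parameter exists so the orchestration can be exercised with a stand-in function before it is pointed at a real database.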

Test two started at 10:40. 97 insert statements this time, expanding to 582 files across the I, E and D pattern in both environments.

The first pass came back wrong. The SQL didn’t conform to our existing standards, standards I hadn’t given Claude. That’s on me, not the tool. I corrected it, regenerated and was ready to run Flyway at 10:53. Thirteen minutes from “go” to “ready to deploy 582 files.”

Then Flyway aborted. A description field in one of the inserts overran the actual column size. Another rule that lived in someone’s head and not in any document. Corrected, regenerated, re-ran.
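A rule like this is cheap to write down once it is known. The following is a minimal sketch of a pre-flight length check that could run before Flyway does; the column names and limits are hypothetical and would need to come from the real schema.

```python
# Hypothetical column limits; real values would be read from the schema.
MAX_LENGTHS = {"config_key": 64, "description": 100}


def find_overruns(rows, max_lengths=MAX_LENGTHS):
    """Return (row index, column, actual length, limit) for every value that is too long.

    Note: len() counts characters. A database that measures column size in
    bytes would need the encoded length instead.
    """
    problems = []
    for index, row in enumerate(rows):
        for column, limit in max_lengths.items():
            value = row.get(column)
            if value is not None and len(value) > limit:
                problems.append((index, column, len(value), limit))
    return problems


rows = [
    {"config_key": "vat_rate", "description": "Standard VAT rate"},
    {"config_key": "levy_note", "description": "x" * 120},
]
overruns = find_overruns(rows)
for index, column, actual, limit in overruns:
    print(f"row {index}: {column} is {actual} characters, limit is {limit}")
```

Catching the overrun here fails in milliseconds, before any migration has started.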

The parallel dev plus UAT migration took under two minutes. The full enable/disable test cycle, every script exercised in both directions across both environments, finished at 11:06. Four minutes.
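Exercising a script "in both directions" can be pictured with a small sketch against an in-memory SQLite database. This is only an illustration of the idea: the table, column names and SQL are assumptions, and the real cycle ran against the actual dev and UAT databases.

```python
import sqlite3


def enabled_flag(conn: sqlite3.Connection, key: str) -> int:
    """Read the enabled flag for one configuration key."""
    row = conn.execute(
        "SELECT enabled FROM app_config WHERE config_key = ?", (key,)
    ).fetchone()
    return row[0]


def exercise_cycle(conn, key, enable_sql, disable_sql):
    """Run enable then disable, recording the flag before and after each step."""
    states = [enabled_flag(conn, key)]
    conn.execute(enable_sql)
    states.append(enabled_flag(conn, key))
    conn.execute(disable_sql)
    states.append(enabled_flag(conn, key))
    return states


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE app_config (config_key TEXT PRIMARY KEY, enabled INTEGER NOT NULL)"
)
# The insert lands disabled, as in the I script.
conn.execute("INSERT INTO app_config VALUES ('vat_rate', 0)")

states = exercise_cycle(
    conn,
    "vat_rate",
    "UPDATE app_config SET enabled = 1 WHERE config_key = 'vat_rate'",
    "UPDATE app_config SET enabled = 0 WHERE config_key = 'vat_rate'",
)
print(states)  # [0, 1, 0]
```

A row that starts disabled, turns on, and returns to disabled has had both of its sibling scripts proven.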

By lunch, 582 files were generated, validated, deployed and tested.

The corrections matter

This is the beat where a lot of AI tooling articles go quiet. Everything worked perfectly, look at the numbers, subscribe for more.

That’s not the honest version.

I corrected Claude twice in one morning. Both corrections were for the same underlying reason: conventions that lived in people’s heads instead of in the repo. SQL formatting standards. Column-length rules. Knowledge that a senior person on the team applies automatically and never thinks about, because they’ve been here long enough to have absorbed it.

Neither correction was a Claude failure. Both were documentation debt made visible.

Here’s the thing about that debt. The old workflow hid it, because the humans doing the work already knew the rules. Claude doesn’t, so the rules have to become explicit. That isn’t a bug. It’s a forcing function.

Two corrections cost me a few minutes. The work the corrections unlocked saved days.

The validation surprise

The thing that actually impressed me wasn’t the speed. It was something quieter.

Part of the test required validation logic. I was deliberately reusing SQL that had already been applied to the database, which meant the validation had to account for existing rows, multiple rows, the realities of a database that isn’t a clean slate. That nuance was in the training material I’d given Claude.

It handled it flawlessly.

Anyone can buy a speedup on generation. Models are good at boilerplate. The harder thing, the thing that determines whether an AI collaborator can carry weight on real database work, is whether it can reason about state. Reuse SQL. Validate against existing data. Handle multi-row cases. That’s not mechanical. That’s the part where a weaker tool produces plausible-looking scripts that quietly corrupt your environment.

The reason it didn’t isn’t magic. It’s that the training material told it how to think about the problem. Once the conventions were written down, the tool could handle the parts of the job that actually require judgment about state, not just the parts that require typing.
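To make "reasoning about state" concrete, here is a minimal sketch of a validation that does not assume a clean slate. It classifies each planned insert by how many matching rows already exist. The schema and the three labels are hypothetical, and an in-memory SQLite database stands in for the real one.

```python
import sqlite3


def classify(conn: sqlite3.Connection, key: str, locale: str) -> str:
    """Label a planned insert by how many matching rows already exist."""
    (count,) = conn.execute(
        "SELECT COUNT(*) FROM app_config WHERE config_key = ? AND locale = ?",
        (key, locale),
    ).fetchone()
    if count == 0:
        return "missing"    # safe to insert
    if count == 1:
        return "present"    # already applied; re-running must not add a second row
    return "duplicate"      # multiple rows; needs a human decision


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE app_config (config_key TEXT, locale TEXT, enabled INTEGER)")
conn.executemany(
    "INSERT INTO app_config VALUES (?, ?, ?)",
    [("vat_rate", "de-DE", 1), ("levy", "fr-FR", 0), ("levy", "fr-FR", 0)],
)

planned = [("vat_rate", "de-DE"), ("levy", "fr-FR"), ("stamp_duty", "it-IT")]
report = {row: classify(conn, *row) for row in planned}
for row, status in report.items():
    print(row, status)
```

The multi-row case is the one that matters: a validation that only checks "does a row exist" would pass the duplicate silently.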

What this is actually about

I started writing this as a “look how fast” piece. The numbers earn that framing. 54 files in under a minute. 582 files generated, tested and deployed before lunch. Days of senior DBA work compressed into a morning.

But the speed isn’t the story. The speed is what you get when two other things are already true.

The first is that the team’s actual operating knowledge has to be in a form the AI can work from. Every correction I made this morning was a place where that knowledge lived in a person’s head and not in a document. The team had standards. We had tooling. We had bash scripts. What we didn’t have was the standards enforced end to end, because the enforcement mechanism was “a senior person remembers.”

People remember imperfectly. They have deadlines. They context switch. They follow the standard most of the time and sometimes, under pressure, they don’t. That’s not a failure of the team. That’s just being a person doing a job.

An AI collaborator working at volume can’t absorb that fallibility the same way a senior person does. It needs the rules written down. The places where the rules aren’t written down are exactly the places where it needs course correction. Each correction is the team paying down a piece of debt it had been carrying without noticing.

The second thing that has to be true is that the existing process has to be worth accelerating. Flyway plus tickets plus the enable/disable pattern is a deliberate, mature solution to a real coordination problem. The article isn’t about AI replacing a broken process. It’s about AI accelerating a solid one. That distinction matters. Pointing AI at a bad process produces fast bad outcomes. Pointing AI at a good process produces a survivability gain on the days when the work spikes.

The interesting question about AI in 2026 isn’t “will the model produce garbage.” Current models are good. They code better than I could have at any point in my career. They surface architectural questions that make my work better. The interesting question has moved.

The interesting question is whether your team’s actual operating knowledge is in a form the AI can work from. If it isn’t, you’ll pay a course-correction tax every time you try to use one. If it is, the AI can take on work that previously required a senior person’s memory, and the days that used to break the team become days that finish before lunch.

That’s the work worth doing. The speedup follows.